Can Twitter/Facebook Help Fight Online Plagiarism?

Earlier this year, Matt Cutts, the head of Google’s Web spam team, posted a video to Google’s Webmaster Help channel on YouTube (embedded below) detailing a scenario that visitors to this site are probably all too familiar with.

In the setup, site A scrapes or otherwise lifts content from site B. However, because of Google’s crawling patterns, site A is spotted first or otherwise has more trust with Google and, as such, is treated as the original.

In the video, Cutts, who previously said that such a scenario was “highly unlikely”, admitted openly that Google is not perfect and does make mistakes in this area, though it is doing everything it can to avoid them.

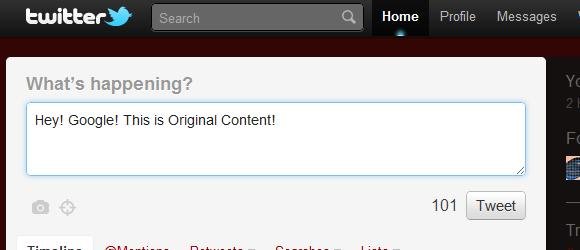

However, in addition to offering the typical Google solutions, including DMCA notices and spam reports, Cutts also listed two ways in which webmasters may be able to fight back against this kind of issue. These were using “push” services and protocols, such as PubSubHubbub, and tweeting a link to new content as soon as it is live.

The reason is that Google is, theoretically at least, constantly monitoring Twitter and the parts of Facebook it can access. Therefore, it’s likely that a link that appears there will be indexed by Google before the copy is crawled on a scraper or plagiarist site.

This makes plenty of sense because, though a spam or plagiarist site might have a higher PageRank than yours, it most likely won’t have more trust than Twitter and Facebook. As such, those updates will be spotted first and trusted more.

Unfortunately, while all of this might have been true in April, when the video was made, it’s of much more dubious use now. The reason is that, in July, Google ended its real-time relationship with Twitter. As such, not only is Twitter not nearly as immediate with Google as it was, but the links are now supposedly nofollowed, meaning Google isn’t discovering new content from Twitter.
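For readers unfamiliar with the term, a link is "nofollowed" when its `rel` attribute contains the token `nofollow`, which asks search engines not to treat it as an endorsement. As a rough illustration only, the Python sketch below separates followed from nofollowed links in a fragment of HTML. The names `LinkCollector` and `split_links` are made up for this example, and it says nothing about how Google itself processes such links.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects link targets, split by whether rel contains 'nofollow'."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.nofollowed = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if not href:
            return
        # rel is a space-separated list of tokens, e.g. rel="nofollow noopener"
        rel_tokens = (attributes.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollowed.append(href)
        else:
            self.followed.append(href)


def split_links(html):
    """Return (followed, nofollowed) lists of hrefs found in the HTML."""
    collector = LinkCollector()
    collector.feed(html)
    return collector.followed, collector.nofollowed
```

Running `split_links` on the markup of a page where you shared a link is one quick way to see how that link is marked up there.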

Facebook has issues similar to Twitter’s, including the nofollowing of links, and much of Facebook is also hidden from Google’s prying eyes.

So, while the technology is there and has been used previously to make Twitter a form of non-repudiation service for Google, independently verifying which links are original, it most likely isn’t very useful now. Still, it’s probably best to play it safe and continue as if nothing has changed. There may still be some benefit to tweeting out new content immediately, and there certainly isn’t any harm.

In the end, it’s likely tools like PubSubHubbub, which push content directly from the site to the interested parties (including Google), that offer the long-term solution. Spammers and plagiarists are so easily able to outrank original creators because of the quirks of a “pull” web, where whoever gets crawled first can look like the source. Switching more to a “push” model should largely remove that problem.
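To give a sense of what "push" means in practice, here is a minimal Python sketch of the ping a publisher sends to a PubSubHubbub hub when a feed has new content: a form-encoded POST with `hub.mode=publish` and `hub.url` set to the feed's address. The hub address and the function names `build_publish_ping` and `send_publish_ping` are assumptions for illustration. Use whichever hub your own feed declares, and check its documentation for the exact behavior.

```python
from urllib.parse import urlencode
from urllib.request import Request, urlopen

# Assumption: a public hub endpoint; substitute the hub your feed declares.
HUB_URL = "https://pubsubhubbub.appspot.com/"


def build_publish_ping(feed_url, hub_url=HUB_URL):
    """Build the POST request that tells a hub a feed has new content."""
    body = urlencode({"hub.mode": "publish", "hub.url": feed_url}).encode("utf-8")
    return Request(
        hub_url,
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def send_publish_ping(feed_url, hub_url=HUB_URL):
    """Send the ping and return the HTTP status code from the hub."""
    with urlopen(build_publish_ping(feed_url, hub_url), timeout=10) as response:
        return response.status
```

Calling `send_publish_ping("https://example.com/feed.xml")` right after publishing (with your real feed URL) asks the hub to fetch the feed and pass the new entry on to subscribers, instead of waiting for each of them to come and crawl it. Many blogging platforms and plugins send this ping automatically.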

However, according to Cutts, Google only places a small amount of credibility in such systems right now, though it may expand its use of them in the near future. It will be interesting to see what impact they have on spammers if and when it does.
