Google Reader Now For Non-RSS Sites

Google Reader, the most popular reader among those who subscribe to this site, added a new feature on Monday that allows users to “subscribe” to a site even if it doesn’t have an RSS feed.

Google Reader does this by regularly checking the page for changes and reporting the new content in an RSS-like format.

While this isn’t of much concern to bloggers, who already offer an RSS feed that Google Reader will prefer, many sites have intentionally steered clear of RSS feeds for various reasons, and they may be deeply concerned.

With that in mind, here is a quick look at how the service works and what it might mean.

How it Works

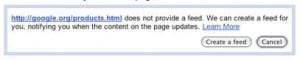

To use this feature, you open up Google Reader, click on its “Add a Subscription” button and enter the URL of a page that does not currently have an RSS feed. Google will then look at the page, index it and check back periodically to see if there are any changes.

When Google Reader does detect a change, it will report it by providing a summary of the new text. This, hopefully, will tell you if the changes are worthwhile and then let you click through to the actual page itself to see the changes in context.
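To make the idea concrete, here is a minimal sketch of that kind of change detection: compare two snapshots of a page’s text and report only the newly added words, cut down to a short summary. This is not Google’s actual implementation, which has not been published. The function name `new_text_summary` and its `max_words` parameter are hypothetical, and the default of 25 words is borrowed from the snippet length I saw in my own tests.

```python
import difflib


def new_text_summary(old_text: str, new_text: str, max_words: int = 25) -> str:
    """Return up to max_words words that were added between two snapshots.

    Hypothetical helper for illustration only; it is not how Google Reader
    is known to work internally.
    """
    old_words = old_text.split()
    new_words = new_text.split()

    # Compare the snapshots word by word rather than character by character.
    matcher = difflib.SequenceMatcher(a=old_words, b=new_words, autojunk=False)

    added = []
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        # "insert" and "replace" both introduce words that are new to the page.
        if tag in ("insert", "replace"):
            added.extend(new_words[j1:j2])

    # Keep the summary short, like the brief snippets the reader shows.
    return " ".join(added[:max_words])


if __name__ == "__main__":
    before = "Welcome to our store. Shoes on sale."
    after = "Welcome to our store. Hats half off today. Shoes on sale."
    print(new_text_summary(before, after))
```

A real service would also need to fetch the page on a schedule and strip out the HTML before comparing, both of which are left out here.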

In short, it works in a very similar fashion to ChangeDetection.com but instead of emailing the results, it provides them in an RSS-style format. This list can be turned into an actual RSS feed through Google Reader by using the ability to subscribe to your own reading list.

For those who are interested, the original announcement, linked above, provides several examples of the feed in action, including Macy’s Special Offers page (new feed).

Why Some May Worry

At first blush, this sounds a lot like what Dapper was, a tool designed to make it easy to scrape content from static HTML pages that don’t provide RSS feeds. (Note: Dapper appears to be only doing dynamic advertising at this time).

Many who have shied away from RSS feeds have done so because they don’t want to see their content re-purposed on other sites and don’t feel that the value to visitors is greater than their own potential losses. Services such as this one, though, seem to force an RSS feed on them by creating one against their will.

However, a closer examination of the feature shows that it really isn’t anything to worry about, at least in terms of content misuse.

Why Not to Worry

Though this feature sounds ominous, it is really not a major concern, at least in its current incarnation.

First, the snippets used in the RSS reader are extremely short, about 25 words in my tests, and the updates seem to be fairly slow. In short, it isn’t useful for scraping, even when ported into a true RSS feed.

Second, it seems unlikely that reposting content you’ve already passed through Google is a good idea. By doing this, you’re essentially ensuring that Google sees the source content first, making it less likely it would favor the duplicate version, though still not impossible.

Finally, the user has no control over what content is picked up. More advanced scraping technology is required to get an effective scrape of new content on a page and, considering it only works on one page, it isn’t likely to have much impact across the whole of a larger site.

All in all, page change detection systems, including ChangeDetection.com, have existed for quite some time with no perceivable negative impact on webmasters and it seems likely that this will be no different.

Bottom Line

If you are still worried about this, you can easily block it by preventing Google from archiving your site. Though I wish there were a way to block this specific activity without blocking all archiving, it seems to be a good way to handle it for the moment.
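If you want to confirm that a page already opts out of archiving, a quick check might look like the sketch below. It assumes the block is done with the standard `noarchive` directive in a robots meta tag (for example `<meta name="robots" content="noarchive">`), which is my reading of “preventing Google from archiving” and not something spelled out above. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from robots/googlebot meta tags (illustrative helper)."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        # Assumption: the opt-out lives in a robots or googlebot meta tag.
        if name in ("robots", "googlebot"):
            content = attr.get("content") or ""
            for directive in content.split(","):
                directive = directive.strip().lower()
                if directive:
                    self.directives.add(directive)


def has_noarchive(html: str) -> bool:
    """Return True if the page's meta tags include a noarchive directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noarchive" in parser.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noarchive"></head></html>'
    print(has_noarchive(page))
```

Keep in mind that this only inspects the HTML you hand it. It says nothing about how any particular crawler will actually treat the page.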

All in all, though, there doesn’t appear to be much reason to worry about this particular service. Not only are the snippets too small and the updates too slow, but Google itself is watching the content that passes through it. There are simply better and easier ways to scrape non-RSS content for reuse.

The bigger problem with the feature is that its uses are fairly narrow. Most sites with regular updates already have an RSS feed, making it largely unnecessary.

In the end, it is probably a feature that would have better served the Web five years ago, when RSS feeds were not so common.
