The Limitation of Every Plagiarism Checker

When it comes to plagiarism, technology has been both a blessing and a curse. Though it has made it easier than ever to find and copy work from others without attribution, it’s also made it easier to track and handle plagiarism when it happens.

With tools that can search billions of documents in seconds and find matches only a few words in length, it might seem as if plagiarism would be as easy to detect as information is to find in Google: a matter of merely punching in your query and going through the results.

Unfortunately, that isn’t the case.

Plagiarism detectors have a huge limitation, one that isn’t likely to go away any time soon. Simply put, plagiarism detectors can’t actually detect plagiarism. Instead, they do something very different.

How Plagiarism Detection Works

This problem might seem a bit odd to those unfamiliar with the technology. After all, dishwashers wash dishes and car starters start cars, but plagiarism detectors don’t actually detect plagiarism.

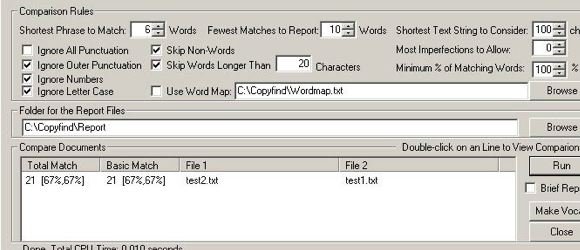

Instead, what they actually detect is sections of identical text. Though there are a variety of techniques for doing this, the end result is pretty much always the same. A plagiarism detection service looks for matching strings of words between the document it’s looking at and the ones it has in its index. This is true for a local plagiarism checker such as WCopyFind, search engine-based systems such as Copyscape and Plagium, and high-end systems such as Turnitin.

They all work on the same principle and function much like we would expect Google or another search engine to work, finding the words we want in other sources and providing the best results they can.
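To make the idea of "matching strings of words" concrete, here is a minimal sketch in Python. It is not how WCopyFind, Copyscape, Plagium or Turnitin are actually implemented; the function names, the three-word window and the sample sentences are all assumptions chosen for illustration. It simply breaks two texts into overlapping runs of words and reports the runs they share.

```python
import re

def shingles(text, n=3):
    """Return the set of n-word sequences found in text (hypothetical helper)."""
    # Lowercase and keep only word characters so punctuation does not block a match.
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {" ".join(words[i:i + n]) for i in range(len(words) - n + 1)}

def shared_phrases(submission, source, n=3):
    """Return the n-word sequences that appear in both texts."""
    return shingles(submission, n) & shingles(source, n)

# Made-up sample texts, not taken from any real index.
source = "Technology has been both a blessing and a curse for writers."
submission = "For teachers, technology has been both a blessing and a burden."

matches = shared_phrases(submission, source)
for phrase in sorted(matches):
    print(phrase)
```

Note what the sketch reports: shared phrases, nothing more. Whether those phrases were quoted, attributed, coincidental or copied is not something the comparison can know.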

This makes them powerful tools, since doing the same comparison by hand would be impossible given all of the sources these tools can check. However, it also means that they have some tremendous blind spots.

Those blind spots are only a problem if people aren’t aware of them or don’t believe that they are there. Then they become huge issues that can lead to both false positives and false negatives.

The Limitations of Plagiarism Detection

Since plagiarism detection tools can only detect copying, or more specifically similar phrases, there are two areas where they are particularly weak.

- Non-Verbatim Plagiarism: Plagiarism that involves rewriting, translating or otherwise redrafting the text can’t be detected. This can be difficult to get away with, as most plagiarism detectors are extremely sensitive, but since plagiarism detectors don’t analyze the content of the work, just the words, they can’t see if you lifted the idea or information if you didn’t also lift the words. This is a common problem in academia, which treats this kind of plagiarism just as seriously as verbatim plagiarism.

- Common Phrasing/Attributed Use: Though many plagiarism checkers will make an attempt to separate out attributed use, given the variety of attribution styles it isn’t always possible. Also, given how common some phrases are in the English language, many plagiarism checkers will report matches that are actually just coincidence.
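Both weaknesses are easy to reproduce with a toy word-matching comparison. The sketch below is an illustration only, under the assumption that a checker compares overlapping three-word sequences; the helper names and the sample sentences are invented for this example and do not come from any real tool.

```python
import re

def word_ngrams(text, n=3):
    """Return the set of n-word sequences in text (hypothetical helper)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {" ".join(words[i:i + n]) for i in range(len(words) - n + 1)}

def overlap(a, b, n=3):
    """Return the n-word sequences the two texts have in common."""
    return word_ngrams(a, n) & word_ngrams(b, n)

# Blind spot 1: the idea is lifted, but every phrase is reworded.
original = "Detection tools look for matching strings of words between documents."
paraphrase = "Checking software hunts for identical word sequences shared by two texts."
missed = overlap(original, paraphrase)

# Blind spot 2: two unrelated sentences share a stock phrase.
essay_one = "At the end of the day, the budget was approved."
essay_two = "The hikers reached camp at the end of the day."
coincidence = overlap(essay_one, essay_two)

print("Paraphrase matches:", sorted(missed))
print("Coincidental matches:", sorted(coincidence))
```

The paraphrase produces no matches at all, while the two unrelated sentences are flagged because both contain "at the end of the day". In each case the comparison did exactly what it was built to do, and in each case the result is misleading without a person to interpret it.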

In short, plagiarism detection tools are just machines and they can make mistakes. However, that is true of any tool. You don’t discard Microsoft Word, for example, because it lets you make a typo.

Also, like any other tools, plagiarism checkers are useless without humans to use them intelligently, which is the biggest problem such tools have.

The Human Element

The answer to all of this is simple: the decision as to what is and what is not plagiarism should be left to human beings. Humans are the only ones who can detect non-verbatim plagiarism and the only ones who can make determinations about the likelihood that the matches are coincidence and whether the attribution was adequate or not.

Professors who have a hard rule about papers not being more than X% matching or authors who don’t let others copy more than X number of words before seeking legal action aren’t fighting plagiarism, but are doing more to confuse the issue.

While bright line rules are always tempting because they are easy to remember and follow, with plagiarism, there are few such rules and you can’t turn your judgment over to a machine.

Bottom Line

None of this is meant as a slight to any of these tools. I use all of the tools listed regularly and am grateful for the valuable service they provide. The problem doesn’t lie with the technology, but with those who treat these tools as magical solutions that are capable of making perfect judgments about plagiarism.

They are anything but.

As tempting as it is to turn over our judgment on plagiarism matters to the machines, it simply doesn’t work. Not only will a lot of plagiarism go undetected, but a lot of people will be accused falsely.

Though plagiarism detection tools are a part of the solution, they have to be used in tandem with human judgment and discretion to do any good.

If used correctly, a plagiarism detection service will alert someone to the possibility of plagiarism, not to its actual existence.
